In February, a fascinating article in The New York Times examined what happens inside the brain of a concert pianist while performing. The story followed the pianist and researcher Nicolas Namoradze, whose unusual project pairs live piano performance with neuroscience. By recording brain activity during concerts, Namoradze and his collaborators have begun to visualize something that has long been invisible: the neurological fireworks that occur when a musician plays.

The results are striking. Brain imaging suggests that playing the piano engages not just isolated regions of the brain but wide networks working simultaneously—motor systems controlling the hands, auditory centers processing sound, cognitive systems interpreting structure and pattern, and emotional circuits shaping interpretation. Observers describe the phenomenon as a “full-brain workout,” a cascade of neural activity occurring in real time as the performer translates music from page to motion to sound.

Reading the article, one can hardly miss that the same neurological description could apply to another profession, one that unfolds quietly every day in courtrooms across the country.

Realtime court reporting.

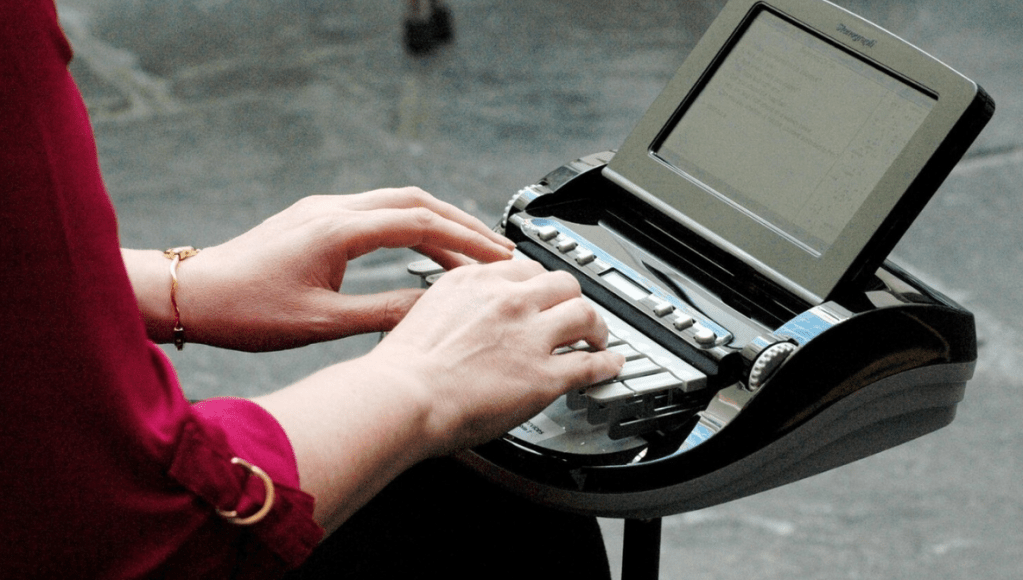

Like concert pianists, stenographic court reporters sit at an instrument with two hands poised above a keyboard. Their performance unfolds live, without rehearsal, and under pressure. And like pianists, they convert a rapidly unfolding stream of information into coordinated finger movements that must occur with extraordinary speed and precision.

Yet the public rarely recognizes the similarity.

Namoradze’s research offers a revealing lens through which to view the cognitive demands of stenographic writing. The pianist’s brain, researchers found, activates broad networks during performance. The same integration of systems (motor control, auditory processing, pattern recognition, and prediction) is what a realtime court reporter relies on while capturing speech verbatim.

In other words, writing realtime shorthand may be neurologically closer to playing a concerto than to ordinary typing.

A Brain Performing at Full Capacity

Namoradze’s “neurorecital” experiments show that piano performance activates multiple regions of the brain simultaneously. Motor regions control finger movement across the keyboard. Auditory regions analyze the sound produced. Cognitive centers track structure and anticipate what comes next in the musical phrase.

The same integration occurs when a court reporter writes realtime.

First, the auditory cortex processes spoken language as it arrives. Courtroom speech is rarely neat or predictable. Witnesses hesitate, attorneys interrupt, and speakers talk over one another. The reporter’s brain must quickly pick out the words despite background noise, unfamiliar accents, and uneven pacing.

Next, language-processing centers interpret meaning and grammar. Speech is not merely sound; it carries structure. The brain must identify subjects, verbs, clauses, and context even as the sentence unfolds.

Then comes translation.

Court reporters do not type English letter by letter. Instead, they convert speech into phonetic shorthand using a stenotype machine—a keyboard designed to record entire syllables or words with a single chorded stroke. The brain must rapidly select the correct shorthand patterns while continuing to listen to the speaker.

At the same moment, the motor cortex directs the reporter’s fingers to execute those strokes with split-second precision.

And all of this must happen continuously, hundreds of times per minute.

The Music of Language

The deeper comparison between piano performance and realtime stenography lies in pattern recognition.

Music is fundamentally structured. Chords resolve, rhythms repeat, and melodies follow predictable arcs. Expert musicians learn to anticipate these patterns long before the next note arrives.

Language works the same way.

Experienced court reporters develop an extraordinary sensitivity to linguistic patterns. Legal phrasing is particularly repetitive. A lawyer may begin a question with “Doctor, within a reasonable degree of medical probability…” and the reporter’s brain instantly recognizes the structure.

That recognition allows the reporter to prepare the shorthand strokes before the sentence finishes.

This predictive ability is what allows realtime writers to keep pace with speech approaching 250 or even 300 words per minute. Like musicians anticipating the next measure of a score, reporters anticipate the direction of language itself.

In both cases, prediction transforms reaction into performance.

The Role of Motor Memory

One of the most remarkable discoveries in neuroscience is the power of motor memory—the brain’s ability to store complex movement patterns so they can be executed automatically.

For pianists, this means that years of practice transform difficult passages into ingrained motor programs. A musician does not consciously think about each finger movement during performance; the body already knows the sequence.

Court reporters rely on the same neurological phenomenon.

A stenographic stroke is not a single keypress. It is a chord, often involving multiple fingers striking keys simultaneously. Thousands of these chord patterns must be memorized during training.

Over time, they become automatic.

When an experienced reporter hears a word like “objection,” the brain does not consciously assemble the letters. Instead, it retrieves the motor program associated with the shorthand stroke and sends it directly to the hands.

The result is a fluid motion—one chord, one word.

In this sense, stenography resembles music more closely than it resembles typing. It is a form of muscle-memory performance driven by auditory cues.

Flow: The State of Performance

Musicians often describe entering a mental state known as “flow,” a condition of intense focus in which performance seems effortless. Psychologists studying expert performers have found that during flow states, certain regions of the brain associated with self-monitoring become less active while networks responsible for motor coordination and pattern recognition become more dominant.

Court reporters describe the same experience.

During fast testimony, reporters frequently say they stop consciously thinking about each word. Listening and writing merge into a single continuous action. The hands move almost automatically while the brain tracks meaning and context.

In that moment, the reporter is not merely typing.

They are performing.

A Hidden Cognitive Virtuosity

Namoradze’s experiments highlight something that neuroscientists have long suspected: activities that appear mechanical from the outside can involve extraordinary mental complexity.

Playing the piano is one of them.

Realtime court reporting is another.

Both require the integration of multiple cognitive systems—auditory processing, linguistic interpretation, motor execution, working memory, and prediction. Both involve instruments that translate human expression into structured output. And both demand years of specialized training before mastery is possible.

Yet while the concert pianist’s virtuosity is celebrated on stage, the virtuosity of realtime writing often goes unnoticed.

In the courtroom, the court reporter sits quietly to the side, hands moving over a machine few people understand. The performance is silent, invisible, and easily overlooked.

But beneath those quiet keystrokes lies a neurological symphony.

The Instrument of the Mind

One of the most intriguing ideas emerging from Namoradze’s research is that the true instrument of musical performance is not the piano.

It is the brain.

The keyboard merely translates the brain’s activity into sound.

The same could be said of the stenotype machine.

To an observer, the machine appears to produce the written record. But in reality, the instrument is only the interface. The real work occurs inside the reporter’s mind—where language, sound, memory, and motion converge in real time.

Speech enters the ear.

Meaning forms in the brain.

Fingers move across the keyboard.

And within moments, spoken words become permanent text.

The Unseen Performance

Namoradze’s neurorecital project allows audiences to watch a pianist’s brain while music unfolds. It reveals the invisible complexity behind an act that might otherwise appear effortless.

If a similar experiment were conducted in a courtroom—if sensors tracked the brain activity of a realtime court reporter during testimony—the results would likely be just as dramatic.

Neural networks would flare with activity as the reporter listened, predicted, translated, and wrote.

A quiet figure at the edge of the courtroom would be revealed as something else entirely: a live performer translating language into record at the speed of conversation.

And as with the pianist on stage, the instrument would be only half the story.

The real performance would be happening inside the mind.